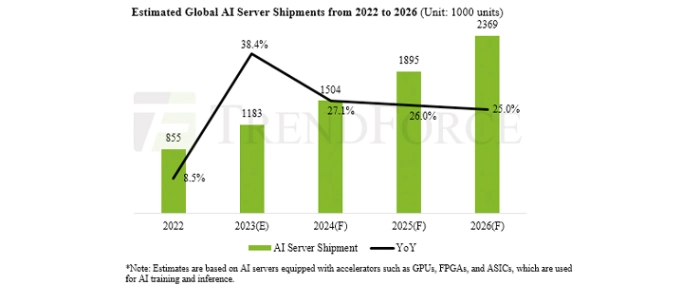

Global AI server shipments predicted to increase 40% in 2023

TrendForce forecasts a dramatic surge in AI server shipments for 2023: an estimated 1.2 million units, outfitted with GPUs, FPGAs, and ASICs, are destined for markets around the world, marking robust YoY growth of 38.4%.

This increase reflects the mounting demand for AI servers and chips. AI servers are poised to constitute nearly 9% of total server shipments, a figure projected to rise to 15% by 2026. TrendForce has revised its CAGR forecast for AI server shipments between 2022 and 2026 upward to an ambitious 22%. Furthermore, AI chip shipments in 2023 are slated to increase by an impressive 46%.
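The shipment figures above can be related to one another with some simple arithmetic. The following is a rough sketch, not a TrendForce calculation: it assumes the 1.2 million figure is exact (the source gives it only as an estimate) and that the 22% CAGR compounds from an implied 2022 base over four years. The helper names `implied_base` and `project` are illustrative.

```python
def implied_base(current: float, yoy_growth: float) -> float:
    """Back out the prior-year value from a current value and its YoY growth rate."""
    return current / (1.0 + yoy_growth)


def project(base: float, cagr: float, years: int) -> float:
    """Compound a base value forward at a constant annual growth rate."""
    return base * (1.0 + cagr) ** years


# Figures quoted in the article; treated as exact here, which is an assumption.
units_2023 = 1_200_000      # estimated AI server shipments in 2023
yoy_2023 = 0.384            # 38.4% YoY growth
cagr_2022_2026 = 0.22       # revised CAGR forecast for 2022-2026

units_2022 = implied_base(units_2023, yoy_2023)
units_2026 = project(units_2022, cagr_2022_2026, years=4)

print(f"Implied 2022 shipments:   {units_2022:,.0f}")
print(f"Projected 2026 shipments: {units_2026:,.0f}")
```

Under these assumptions, the implied 2022 base is roughly 0.87 million units and the 2026 projection is roughly 1.9 million units. Because the inputs are rounded forecasts, the results are indicative only.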

TrendForce analysis indicates that Nvidia's GPUs currently dominate the AI server market, commanding an impressive 60–70% market share. Hot on their heels are ASIC chips, independently developed by CSPs, which account for over 20% of the market. Three crucial factors fuel Nvidia's market dominance.

First, Nvidia's A100 and A800 models are sought after by both American and Chinese CSPs. Demand in 2H23 is predicted to tilt gradually towards the newer H100 and H800 models. These models carry an ASP that's 2–2.5 times that of the A100 and A800, amplifying their allure. Furthermore, Nvidia isn't resting on its laurels; it's proactively bolstering the appeal of these models through aggressive promotion of its self-developed machine solutions, such as DGX and HGX.

Second, the profitability of high-end GPUs, specifically the A100 and H100 models, plays a vital role. TrendForce research suggests that Nvidia's dominant position in the GPU market allows it to vary H100 pricing significantly; the difference could amount to nearly USD 5,000, depending on the buyer's purchase volume.

Lastly, the pervasive influence of chatbots and AI computing is predicted to persist into the latter half of the year, expanding its footprint across professional fields such as cloud and e-commerce services, intelligent manufacturing, financial insurance, smart healthcare, and advanced driver-assistance systems. At the same time, there has been a noticeable upswing in demand for AI servers, particularly cloud-based ones equipped with 4–8 GPUs and edge AI servers with 2–4 GPUs. TrendForce forecasts a surprising annual growth rate of 50% in shipments of AI servers equipped with Nvidia's flagship A100 and H100 models.

TrendForce reports that HBM, a high-speed RAM interface deployed in advanced GPUs, is poised for a significant uptick in demand. The forthcoming H100 GPU from Nvidia, slated for release this year, is equipped with the faster HBM3 technology. This improvement over the previous HBM2e standard boosts the computing performance of AI server systems. As the need for cutting-edge GPUs (including Nvidia's A100 and H100, AMD's MI200 and MI300, and Google's proprietary TPU) continues to escalate, TrendForce forecasts a striking 58% YoY increase in HBM demand for 2023, with a further increase of an estimated 30% expected in 2024.
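To see what the two HBM growth figures imply together, the rates can be compounded. This is a small sketch under one stated assumption: that the 30% figure for 2024 is a YoY increase on top of 2023 demand, which the source does not spell out. The function name `compound` is illustrative.

```python
def compound(growth_rates):
    """Multiply successive annual growth rates into one cumulative factor."""
    factor = 1.0
    for rate in growth_rates:
        factor *= 1.0 + rate
    return factor


# 58% growth in 2023, then an assumed 30% YoY growth in 2024.
hbm_factor = compound([0.58, 0.30])

print(f"HBM demand in 2024 relative to 2022: {hbm_factor:.2f}x")
```

On that reading, HBM demand in 2024 would be a little over twice its 2022 level.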

For more information visit TrendForce.
